Focal: An Eye-Tracking Musical Expression Controller

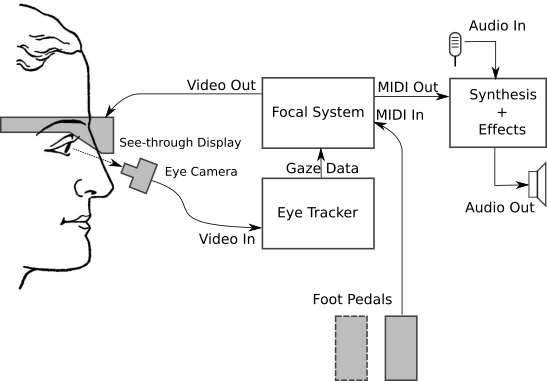

Focal is an experimental eye-tracking musical expression controller which allows hands-free control over audio effects and synthesis parameters during performance. A see-through head-mounted display projects virtual dials and switches into the visual field. The performer controls these with a single expression pedal, switching context by glancing at the object they wish to control. This simple physical interface allows for minimal physical disturbance to the instrumental musician, whilst enabling the control of many parameters otherwise only achievable with multiple foot pedalboards.

The system consists of four main parts:

- The Headset, including display and eye camera.

- The Pedal

- Eye Tracker

- Focal Application

For technical details, see our NIME 2016 paper. For more information about the video, see the Evaluation section.

Motivation

Electronic effects can enhance acoustic sounds, creating new timbres and sonic textures. Effects have parameters that we may want to expressively control during performance. But if both hands are occupied playing an instrument, how do we control other aspects of the performance? The most obvious solution is to use pedals. For example:

| Keith McMillen 12-Step | Roland FC-300 |
| --- | --- |
| One octave of touch-sensitive keys, useful for playing bass lines. | General-purpose MIDI pedal controller with two expression pedals and 8 digital pedals. |

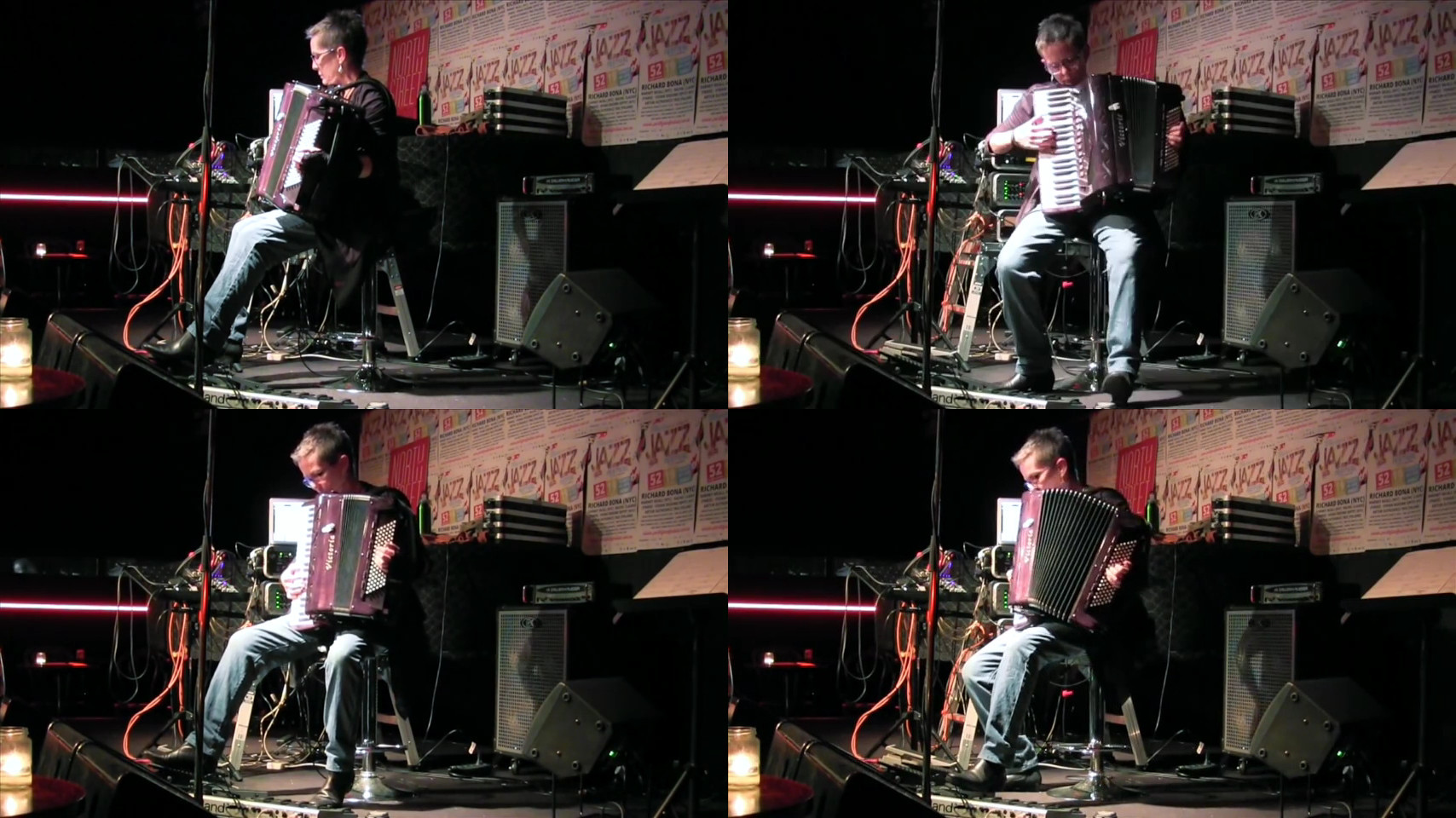

The problem is that it can be difficult to manage more than a couple of pedals. Here is Cathie Travers performing her piece "Procession" for accordion and laptop at The Ellington Jazz Club in Northbridge. Regardless of how the pedals are positioned, some fancy footwork is required. Due to the shape and position of the accordion, the player often cannot see their feet, particularly on the left-hand side, so it is necessary to turn the whole body (e.g. on a swivel chair) to reach all the pedals. An accordion can weigh 10-15 kg, so an added challenge is to maintain posture and balance while moving the feet between pedals.

Here is a slightly different perspective of Cathie's piece Elegy #2 recorded in her studio.

It would certainly be easier if the pedals were always in reach. If we didn't have to shift our feet, what is the most control we can achieve with just two pedals?

This question drives the design ideas behind "Focal":

- Two pedals only, one per foot

- Parameters are displayed in a see-through head-mounted display (AR glasses).

- Choose a parameter to control by looking at it. Eye-tracking allows the interface to respond to our gaze

- Adjust parameter value using either pedal

- Separation of selection (gaze) and articulation (pedal)

- No need to move feet from pedals, except if we wish to adjust balance or posture
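The separation of selection and articulation can be sketched in a few lines. This is a minimal illustration in Python, not Focal's actual code (Focal itself is written in Java); the `Router` class and its method names are hypothetical, and the control names are only examples.

```python
class Router:
    """Route pedal values to whichever control the performer last looked at."""

    def __init__(self, control_names):
        # Each control remembers its own value (0-127, as for a MIDI controller).
        self.values = {name: 0 for name in control_names}
        self.selected = None  # nothing is selected until the first glance

    def on_gaze(self, control_name):
        """Selection: a glance at a known control makes it the pedal's target."""
        if control_name in self.values:
            self.selected = control_name

    def on_pedal(self, value):
        """Articulation: the pedal changes only the selected control."""
        if self.selected is None:
            return None
        self.values[self.selected] = max(0, min(127, value))
        return self.selected, self.values[self.selected]


router = Router(["SYN1_VOL", "SYN2_VOL"])
router.on_gaze("SYN1_VOL")
router.on_pedal(100)
router.on_gaze("SYN2_VOL")
router.on_pedal(40)
print(router.values)  # {'SYN1_VOL': 100, 'SYN2_VOL': 40}
```

One pedal ends up setting two independent parameters, and the foot never has to leave it.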

Headsets

Mark 1

This was the first headset I built, from:

- Microsoft HD-5000 web camera (~$30)

- A 4.3" reversing camera monitor (~$20)

- A welding helmet (~$30)

- Some aluminium tubing, cable ties, old meccano pieces, plastic garden stake (~$20)

This design takes some inspiration from the camera rigs used for facial motion capture. It was fairly cheap to make, around $100 in parts, and worked sufficiently well to prove the concept. Video for the heads-up display (HUD) is sent via a composite video cable.

Advantages:

- Cheap

- Super adjustable. Screen and camera can be positioned almost anywhere, allowing different viewing distances and angles to be evaluated

Disadvantages:

- Bulky, though quite light. OK for some instruments, but can collide with an accordion when looking down.

- Close position of monitor may not be suitable for people with long-sightedness or presbyopia

- Image registration is not perfectly independent of head position. Tilting the head significantly up or down causes some slight rotation, due mainly to slight flexion of the scalp.

Mark 2

This headset combines a Pupil Labs DIY Headset with an EPSON Moverio BT-200AV see-through mobile viewer. HDMI video for the HUD is sent via an EPSON wireless adapter.

Advantages:

- Lightweight

- Clear and bright display

- Fairly stable camera position

Disadvantages:

- Expensive. BT-200AV cost is ~AU$800. The wireless adapter appears to only be available in the Japanese market, so must be imported.

- Video lag is about 250 ms, which makes the HUD difficult to use.

- Video connection is flaky, often taking many attempts to establish a connection.

Mark 3

This headset combines a Pupil Labs Eye Camera and BT-200 Camera Mount with an EPSON Moverio BT-200AV see-through mobile viewer. The HUD is rendered by custom Android software running on the Moverio, communicating wirelessly with a laptop which runs the tracker and Focal interface.

Advantages:

- Lightweight

- Clear and bright display

- Fairly stable camera position. This could be improved with a better nose-piece, such as the one that appears to be supplied with the new BT-300. The updated tracker uses a 3D eye model which can compensate for small amounts of camera drift.

- Robust wireless connection using ZeroMQ over WiFi. There is no perceptible lag in the HUD display.

Disadvantages:

- Expensive. BT-200AV cost is ~AU$800. See Pupil Labs Store for current pricing on eye camera and mount.

- Wireless router is required. Moverio cannot connect to ad-hoc wireless networks, and cannot be used as a wireless hotspot.

Pedals

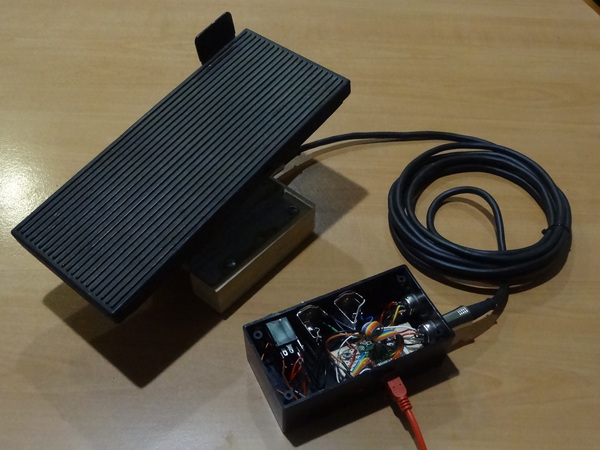

Each Focal pedal consists of two sensors:

- An analog "continuous" control

- A digital "toe switch" control

This configuration of controls is often found in the volume controls of electronic organs. The toe switch would typically be used for changing registers, or for starting/stopping a drum rhythm. In Focal the analog control is used for adjusting continuous MIDI controls. The digital "switch" can be used for digital controls, including discrete, note, and toggle controls. The switch also controls locking when operated on a continuous control.

Pedal 1

I made this pedal from the volume control of a broken electronic organ. These can often be found in verge rubbish collections. During one collection in my suburb there were no fewer than three organs: one was fully working, but the others had already been partly gutted. Speakers seem to be prized, as are spring reverbs. I saved the working organ, and used one of the others for parts.

I built a MIDI controller using a Teensy 2, which supports up to four pedals, each with a potentiometer and up to two switches. Two of the pedals are wired with MIDI cables, which just happen to have the required 5 conductors (ground, power, analog in, switch #1, switch #2). The other two pedals are wired with discrete phono cables: mono cables for the switches, and stereo cables for the potentiometers.
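As a rough illustration of what such a controller has to do for each potentiometer, the following Python sketch scales an analog reading to a 7-bit MIDI value and builds a Control Change message. It is not the Teensy firmware. The function and class names, the choice of controller number 11, and the 10-bit reading range (0 to 1023) are assumptions made for illustration.

```python
def adc_to_cc(reading, adc_max=1023):
    """Scale a raw ADC reading (0..adc_max) to a 7-bit MIDI value (0..127)."""
    reading = max(0, min(adc_max, reading))
    return reading * 127 // adc_max


def control_change(channel, controller, value):
    """Build the 3 bytes of a MIDI Control Change message.

    channel is 0-15; controller and value are 0-127.
    """
    return bytes([0xB0 | (channel & 0x0F), controller & 0x7F, value & 0x7F])


class PedalInput:
    """Emit a message only when the scaled value changes, so that a pedal at
    rest does not flood the MIDI output with identical messages."""

    def __init__(self, channel, controller):
        self.channel = channel
        self.controller = controller
        self.last_sent = None

    def update(self, reading):
        value = adc_to_cc(reading)
        if value == self.last_sent:
            return None  # nothing new to report
        self.last_sent = value
        return control_change(self.channel, self.controller, value)


pedal = PedalInput(channel=0, controller=11)  # CC 11 is conventionally "expression"
for raw in (0, 4, 512, 1023):
    message = pedal.update(raw)
    if message is not None:
        print(message.hex())
```

A real controller would probably also smooth the readings or add hysteresis to suppress electrical noise; that is left out here to keep the sketch short.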

Pedal 2

This is the expression pedal from my Excelsior Digisyzer, to which I fitted a 5-pin connector in parallel with the weird centronics-style connector it came with. It has two toe switches, which are labelled "GLIDE" and "FILL IN DRUM".

Pedal 3

This pedal attempts to fix some of the problems experienced during evaluation of the previous two pedals:

- The organ-type pedals are "long throw", meaning that they have a relatively large angle of travel. This was found to result in an uncomfortable posture.

- The organ pedal mechanisms are quite loose, and thus too sensitive. This means it is difficult to move the foot on or off the pedal without disturbing its setting.

This pedal adds a new type of toe-switch to an existing pedal, which can be anything that the performer prefers. Here, we used a Boss FV-500. The switch module sits on top of the pedal and has an end stop to provide precise location for the toe. The switch is operated by slightly raising the toe, which is an easier movement than "swiping" the entire foot to the left. The pedal has a stiffer mechanism, so one can move the foot on or off the pedal without disturbing it.

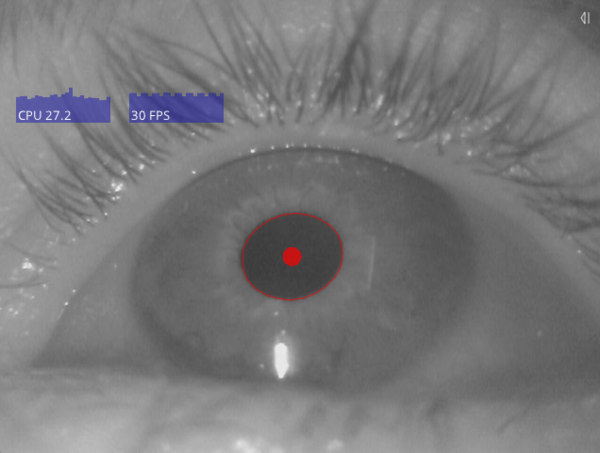

Eye Tracker

We are using the Pupil tracker, which is open source and cross-platform. This is an ideal platform for prototyping and experimenting with eye tracking. The core code is written in Python, and is extensible through plugins. Image processing is coded in C++ and seems to run very efficiently. On my MacBook Air, a single eye camera at 30 Hz uses less than 30% of one CPU, leaving plenty of processing power for running the UI, and even some modest sound synthesis in Ableton Live.

Pupil publishes gaze data using the ZeroMQ distributed messaging system. This allows simple, reliable communication between Pupil and user processes running on the same or different machines. Data is written in JSON format, which is widely supported across most programming languages.
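To illustrate the kind of message handling involved, here is a minimal Python sketch that decodes one gaze datum from JSON. The field names (`norm_pos`, `confidence`, `timestamp`), the confidence threshold, and the sample values are assumptions for illustration and should be checked against the Pupil version in use. The ZeroMQ transport is omitted so that the example runs with the standard library alone.

```python
import json
from typing import NamedTuple, Optional


class Gaze(NamedTuple):
    x: float           # normalised horizontal position (assumed range 0.0 to 1.0)
    y: float           # normalised vertical position (assumed range 0.0 to 1.0)
    confidence: float  # tracker's confidence in this sample
    timestamp: float   # capture time in seconds


def parse_gaze(payload: str, min_confidence: float = 0.6) -> Optional[Gaze]:
    """Decode a JSON gaze message.

    Returns None if the message is malformed or its confidence is too low.
    """
    try:
        data = json.loads(payload)
        x, y = data["norm_pos"]
        gaze = Gaze(float(x), float(y),
                    float(data["confidence"]), float(data["timestamp"]))
    except (ValueError, KeyError, TypeError):
        return None
    return gaze if gaze.confidence >= min_confidence else None


# A hypothetical message, as it might arrive from the tracker.
sample = '{"norm_pos": [0.42, 0.58], "confidence": 0.93, "timestamp": 1234.5}'
print(parse_gaze(sample))
```

Discarding low-confidence samples early keeps blinks and tracking dropouts from reaching the user interface.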

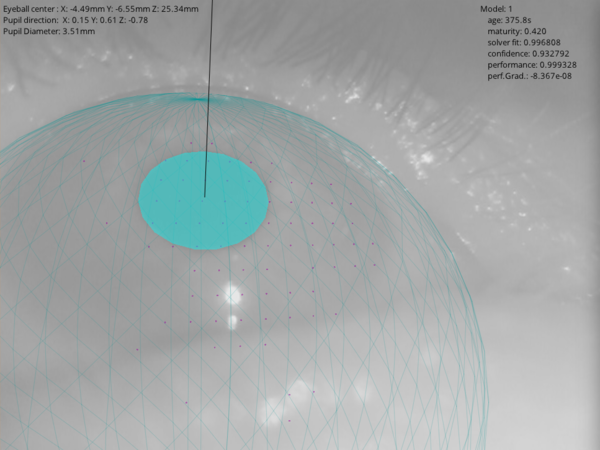

As of the 0.7.4 release, Pupil supports both 2D and 3D eye models. The 3D model uses pupil motion to infer the location of the eyeball with respect to the camera. This information can be used to compensate for camera movement over time. In addition, the 3D model is more robust to occlusions of the pupil in the image due to reflections, eyelashes, or eyelids. Future releases of the Pupil platform are expected to officially support 3D calibration and gaze mapping for head-mounted displays, which should give dramatically better stability than the system presently achieves.

Here is a sample infra-red image from the eye camera, as displayed in the user interface of the Pupil tracker. The computed pupil location is shown as a red ellipse, and a dot indicates the centre position.

This is the debug view for the 3D eye model, showing the predicted eyeball geometry, the pupil location, and the surface normal (i.e. the gaze vector).

Focal Application

Focal consists of about 2,000 lines of Java code. It reads a definition file which describes the sensors (i.e. emulated MIDI controllers) and their grouping into scenes, as well as variables such as the input and output MIDI devices, the number of pedals, and the dispersion time. Focal connects to Pupil via ZeroMQ and receives gaze positions as JSON objects. We use JeroMQ, a pure Java implementation of the ZeroMQ protocol.
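The role of a dispersion time can be illustrated with a dispersion-threshold fixation detector, a common technique in eye tracking. The Python sketch below is an assumption about how such a parameter might be used, not a description of Focal's or Pupil's implementation: here the dispersion time is read as the minimum duration for which the gaze must stay within a small region, and the class name and the dispersion threshold are hypothetical.

```python
class FixationDetector:
    """Report a fixation once the gaze has stayed within a small region
    for at least `min_duration` seconds."""

    def __init__(self, min_duration=0.1, max_dispersion=0.05):
        self.min_duration = min_duration      # seconds (100 ms here)
        self.max_dispersion = max_dispersion  # normalised display units (assumed)
        self._reset()

    def _reset(self):
        """Forget the current run of samples."""
        self.start = None
        self.count = 0
        self.sum_x = self.sum_y = 0.0
        self.min_x = self.min_y = float("inf")
        self.max_x = self.max_y = float("-inf")

    def add(self, timestamp, x, y):
        """Add one gaze sample; return the fixation centre (x, y) or None."""
        # Dispersion the run would have if this sample joined it.
        width = max(self.max_x, x) - min(self.min_x, x)
        height = max(self.max_y, y) - min(self.min_y, y)
        if width + height > self.max_dispersion:
            self._reset()  # the gaze has moved: start a new run here
        if self.start is None:
            self.start = timestamp
        self.count += 1
        self.sum_x += x
        self.sum_y += y
        self.min_x, self.max_x = min(self.min_x, x), max(self.max_x, x)
        self.min_y, self.max_y = min(self.min_y, y), max(self.max_y, y)
        if timestamp - self.start < self.min_duration:
            return None  # not steady for long enough yet
        return self.sum_x / self.count, self.sum_y / self.count


detector = FixationDetector()
for t, x, y in [(0.00, 0.50, 0.50), (0.05, 0.51, 0.50), (0.10, 0.50, 0.51)]:
    print(t, detector.add(t, x, y))
```

A longer dispersion time makes accidental activations less likely, at the cost of a slower response to each glance.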

Focal can generate a local HUD user interface display, which can be used directly on headsets that support video input. This was possible with Headset 1, which was driven via an HDMI to AV adapter. With Headset 2, the EPSON wireless adapter could be used to stream HDMI video to the Moverio headset, but this worked too poorly to be usable: the wireless connection was very unreliable, and it introduced a lag of about 250 ms. In addition, upgrading the Moverio to the developer release breaks wireless streaming.

With Headset 3 I implemented the core HUD display as an Android app running on the Moverio headset. This communicates with Focal over a separate ZeroMQ connection, over which Focal outputs the control layout whenever it changes (e.g. during a scene transition), and the activation state whenever this changes (e.g. as controls are activated and change state). The same connection also forwards calibration messages which are output from the custom Pupil calibration procedure. This means that when calibration is activated in Pupil, the headset automatically switches to display the calibration markers, returning to the Focal display once complete. With the 3D eye model, only five calibration points are required, so it is possible to recalibrate the system quite quickly and easily. Importantly, there is no discernible lag between Pupil, Focal and the remote display app.

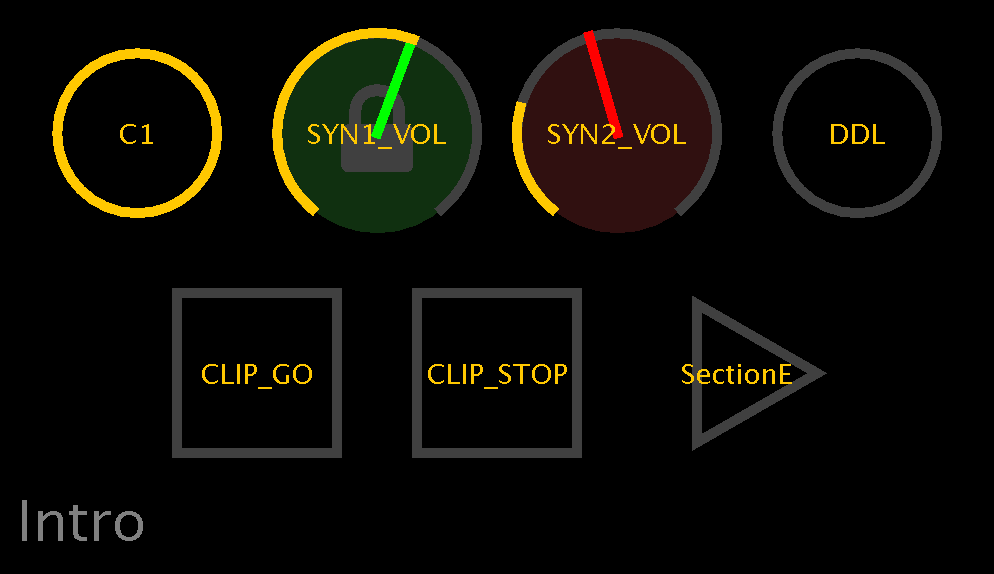

Here is an example of the HUD display. The display elements are large and have high contrast, so that they can easily be seen over the surrounding environment. Circles indicate "stateful" devices, such as continuous controllers and toggle switches. Squares indicate "stateless" devices, such as notes and triggers. The triangle indicates a "scene" control, which like a hyperlink transitions to a different scene. For more details see the NIME 2016 Paper, or the video below.
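The three kinds of display element, and the step of deciding which one the performer is looking at, can be modelled roughly as follows. This Python sketch is illustrative only: the type names, the circular activation region, the coordinates, and the example scene are all assumptions, and Focal's own data model (in Java) may differ.

```python
import math
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    STATEFUL = "circle"    # continuous controllers and toggle switches
    STATELESS = "square"   # notes and triggers
    SCENE = "triangle"     # transitions to another scene, like a hyperlink


@dataclass
class Control:
    name: str
    kind: Kind
    x: float                # centre, in normalised display coordinates
    y: float
    radius: float           # gaze within this distance selects the control
    target_scene: str = ""  # only meaningful for SCENE controls


def control_at(controls, gaze_x, gaze_y):
    """Return the nearest control whose region contains the gaze point, or None."""
    best, best_dist = None, float("inf")
    for control in controls:
        dist = math.hypot(gaze_x - control.x, gaze_y - control.y)
        if dist <= control.radius and dist < best_dist:
            best, best_dist = control, dist
    return best


# A hypothetical scene with one control of each kind.
scene_1 = [
    Control("SYN1_VOL", Kind.STATEFUL, 0.25, 0.5, 0.1),
    Control("CLIP_GO", Kind.STATELESS, 0.50, 0.5, 0.1),
    Control("NOTES", Kind.SCENE, 0.75, 0.5, 0.1, target_scene="scene_2"),
]
hit = control_at(scene_1, 0.52, 0.48)
print(hit.name, hit.kind.value)  # CLIP_GO square
```

Making the selectable region larger than the drawn shape is one way to tolerate small errors in the gaze estimate.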

Here is an attempt to show roughly what this looks like from behind the glasses. The HUD is visible over the surrounding environment. The image is fairly clear, but there is some "ghosting" due to the LCD backlight which reveals the outline of the display. The upcoming Moverio BT-300 is expected to feature a Si-OLED display, in which black pixels will be fully transparent, resulting in a more immersive experience.

As seen here, the display is still quite visible outdoors. For very bright environments, the Moverio includes a sunglasses-like optical shade which can optionally be clipped onto the front.

Evaluation

In the last 6 months I have been playing around with various hardware and interface ideas, and the design has grown in maturity and scope. Cathie Travers has been helping to refine and test some of these ideas, by seeing how the system could be used in her pieces for accordion and electronica.

We implemented a setup for her piece "Elegy #2" for accordion and laptop. The piece uses the following digital effects hosted in Ableton Live: delay, synthesizer 1, synthesizer 2, clip launcher. Pedals used in the original setup include a Roland FC-300 MIDI foot controller, a discrete expression pedal (controlled by the FC-300), and a Keith McMillen 12-Step Controller. These are arranged around a saddle chair, which allows her to turn to reach each device. Functions mapped to the pedals include: start and stop a low C drone on both synthesizers (C3), separately control the volume of each synthesizer (SYN1_VOL, SYN2_VOL), start and stop a pre-recorded audio clip (CLIP_GO, CLIP_STOP), enable and disable a digital delay (DDL), and play a bass line from a C minor scale, using 8 of the 12 notes from one octave. In a live concert there is a third continuous control pedal for the master volume output from Ableton Live, which must be balanced against the amplified live accordion volume level. There are a total of 11 digital switches and 3 continuous controls. The piece can require retriggering the clip while it is still playing, so it includes separate start and stop buttons, rather than a single toggle. The Focal controls were grouped into two scenes, with the scale notes in the second scene and all other controls in the first; the delay control appears in both scenes.

| Original multi-pedal system | "Virtualised" with Focal |
| --- | --- |
| 11 digital, 3 analog pedals | 2 pedals |

Here you can see a video of her original piece "Elegy #2", using a multi-pedal system.

Below is a comparison video of the performance using the Focal system. There are a couple of minor musical mistakes in the performance, but we hope it illustrates how the interface works. Like any system, proficiency requires practice. This recording is from Cathie's first session with the system, and is only the third take for the piece.

Laptop #1 runs the eye tracker and Focal process, and Laptop #2 runs an Ableton Live set that includes audio effects and two synthesizers. The laptops are connected via a single MIDI cable. The video includes:

- A view of the HUD, which is projected through the headset. The eye fixations are shown as blue circles. The dispersion window period is 100 ms.

- A view of the eye, input to the eye tracker

- A view of the foot pedals

- Audio output from Ableton Live